Every growing company eventually hits the moment where the opportunities in front of you outnumber the people and dollars behind you. And someone has to decide what gets a “yes.”

I’ve been on both sides of that. In a past life, I had to make directional decisions in a fast-growing company without a great framework for making them. I was an intuitive decision-maker: gut feel, pattern recognition, reading the room. Sometimes it worked. Sometimes it didn’t.

And the tension between building something core to who you are and chasing where the revenue is actually coming from? That’s one of the hardest things to navigate when things are moving fast.

More recently, I had the opportunity to mature the way I make decisions when we stood up a Data Practice at SEP.

The Decision That Wasn’t on a Spreadsheet

We’ve been doing data work for a long time, long before “data engineering” was a job title on LinkedIn. But it wasn’t something we’d packaged or named. It lived inside other engagements, embedded in broader product development work.

Then a few things started converging. Customers began asking for things we hadn’t explicitly offered yet: data-centric work that went beyond what we were scoping into software projects.

The Spark

We were watching data tooling and practices mature across the industry. And AI conversations were accelerating everywhere, making data infrastructure feel less like a nice-to-have and more like a prerequisite for where things were headed.

There were a lot of directions we could’ve gone to get started. But when I look back at how we actually made the call, it wasn’t a single data point or a business case that tipped it. It was the accumulation of signals (customer pull, industry momentum, and internal capability that already existed but wasn’t being leveraged), layered on top of a judgment call that said this was the right time.

Time to Decide

Looking back, I realize the way we made that call was less a rubric than a heuristic, and I’ve learned there’s a meaningful difference.

Rubrics vs. Heuristics (and Why It Matters)

These are my working definitions, so dust off your dictionary if you want more precise ones.

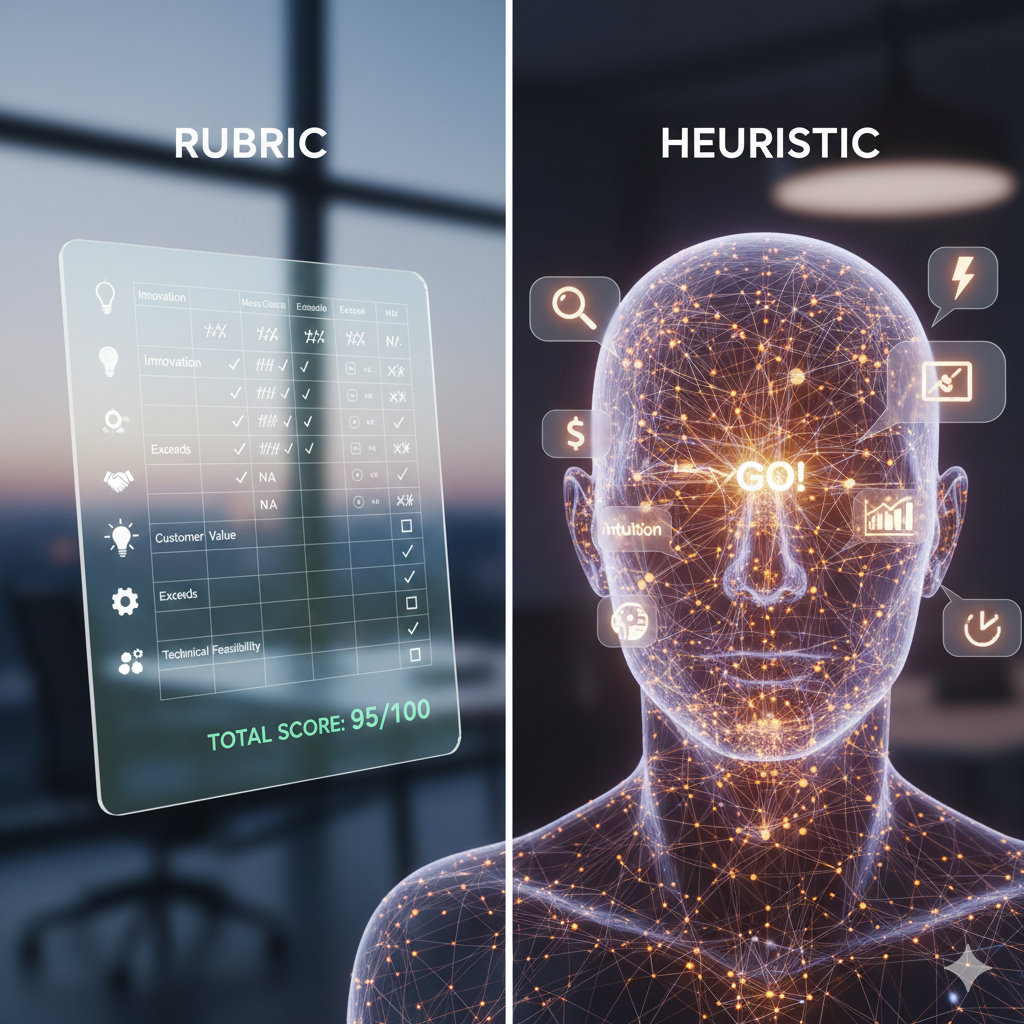

A rubric is a scoring grid. You check the boxes, tally the points, and the answer reveals itself. Rubrics are great when you have clean inputs and repeatable decisions. But most growth decisions don’t work that way, at least not the first time. I think a heuristic is a better approach in this case.

What is a heuristic?

A heuristic is a mental shortcut. It improves your odds without pretending to guarantee the outcome. It accounts for the fact that some of the information you’re working with is incomplete, some is instinct, and some of it you won’t be able to validate until you’re already in motion.

I was thinking about this distinction after a recent conversation on Behind the Product with Indragie Karunaratne, Director of Engineering at Sentry. Sentry spent years as a single-product company focused on error monitoring before expanding into a multi-product platform. And their investment filter is elegant: Will tens of thousands of organizations use this? And does it help developers fix broken code? Unless the answer to both is yes, they don’t build it.

But here’s what caught my attention: that filter didn’t come from a strategy offsite. It came from trial and error. They built products that were technically impressive but never reached broad adoption, and things that were really popular but didn’t match the mission. It took real-world attempts to distill the heuristic they use today.

That honesty resonated. Because the Data Practice decision at SEP wasn’t born from a perfect framework either. It was born from paying attention, having enough pattern recognition to trust what we were seeing, and making a judgment call. The heuristic got clearer after we were in motion, not before.

The Pull of Revenue vs. The Pull of Identity

There’s another force that makes these decisions harder: money on the table.

Indragie talked about this on the show when he described the tension between Sentry’s product-led DNA and the reality that sometimes an enterprise deal shows up with a feature request that doesn’t fit the strategy. They don’t have a hard rule against it. They occasionally make exceptions.

But knowing which exceptions are strategic and which ones pull you off course is one of the hardest judgment calls in product leadership. I’ve felt that pull.

When revenue is right there (real dollars, real customers, real urgency), it takes real conviction to say “that’s not who we are.” Especially when you don’t have a crisp heuristic to point to. In those moments, gut feel isn’t a weakness. It might be the only thing keeping you from drifting into something you can’t easily undo.

But gut feel alone isn’t enough either. The informed part matters. We didn’t launch the Data Practice on a hunch.

- We had customer signal.

- We had industry momentum.

- We had internal capability.

The gut just told us it was time to connect those dots and pick this path over the dozen other options sitting in front of us.

Building the Heuristic While You’re Building the Thing

Here’s where I’ve landed:

The cleanest decision frameworks tend to get built after the messiest decisions. You do the hard thing, you learn what worked and what didn’t, and then you have a heuristic worth naming.

Sentry’s two-question filter is a great example. So is our experience with the Data Practice. The decision felt right at the time, and the early results have validated it. But if I’m being honest, I’m still developing my heuristic for guiding these kinds of decisions. I can describe the ingredients (customer pull, market timing, internal readiness, and a judgment call on where to start), but I haven’t distilled them into something as clean as two questions.

And maybe that’s okay. Maybe the real skill isn’t having the perfect heuristic. Maybe it’s developing the instinct to know when you have enough signal to move, and the honesty to course-correct when you don’t.

The heuristic gives you structure. The gut gives you timing. And the willingness to learn from both? That’s what actually builds something that lasts.

How do you make growth decisions when the data is directional but not definitive? I’d love to hear from folks who’ve had to place bets on new offerings, products, or practices. What’s your heuristic, named or not?