AI & Data Articles

Agentic Software Development Requires Good Engineering Practices

I’ve recently started working on a client project that is using Agentic AI to develop a sizable mobile application with a medium-sized team. There are a couple of lessons I’m learning about how AI and architecture fit together. We have found the number of lines of code each developer generates is far more than […]

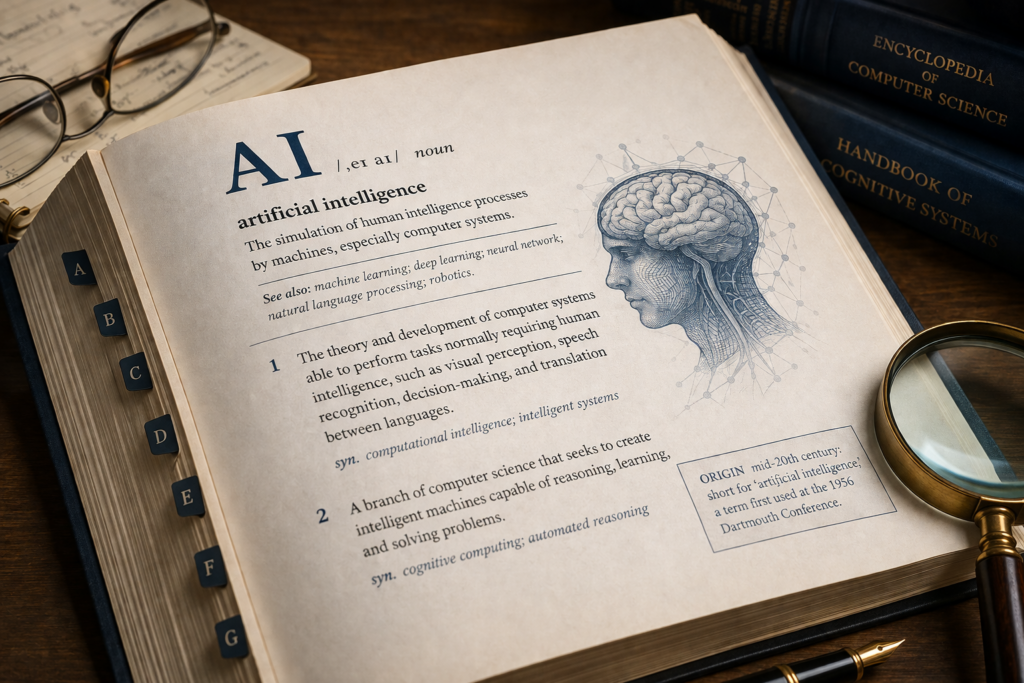

24 AI Terms Every Professional Needs to Know in 2026

AI, LLM, Context, Agent, Harness… If you can’t pin down a precise definition for any of those words, keep reading. The workplace has been flooded with new AI terminology. To add confusion, many of the words get used interchangeably. Words are important. It’s how we communicate. It’s how we make sure everyone is on the same page. So let’s start with the […]

The Workflow That Teaches Itself: A Self-Improving Agent Workflow

There’s a wave of writing right now about “harness engineering” — the idea that the infrastructure wrapped around an AI agent matters more than the model underneath it. The data is hard to argue with. Vercel deleted 80% of their agent’s tools and watched accuracy jump from 80% to 100%. OpenAI published a dedicated post […]

Treat Your AI Workflow Like You Treat Your Code

I kept having the same argument with Claude. Not a dramatic one. More like the low-grade friction of a coworker who keeps formatting the PR description wrong no matter how many times you fix it. The AI would reach for the wrong abstraction. I’d correct it. Next session, same mistake. I’d correct it again. And […]

Beyond Gold: A Platinum Layer for Wider Data Sharing

Medallion architecture (Bronze → Silver → Gold) scales well inside a governed platform—until you need to scale consumption beyond the trust boundary. Medallion Plus adds Platinum: a governed distillation layer that turns “safe to share” into a repeatable pipeline outcome, not a growing pile of one-off exports and access exceptions.

The AI Collaboration Paradox

One of our teams at SEP has gone all-in on agentic development. I don’t mean they’re simply using AI tools heavily; I mean they’re deliberately redesigning their team processes, shared infrastructure, and ways of working around it. When I was talking to the team about their experiences, one thing kept jumping out at me: AI […]

AI Agent Collaboration Starts with the Right Mental Model

You’re probably treating your AI agent like a junior developer. I know I was. It makes sense, right? The framing shows up in genuinely respected places — OpenAI’s own prompt engineering documentation advises treating GPT models like “a junior coworker” who needs explicit instructions to produce good output; engineering teams at major consultancies have characterized […]

Where AI Fatigue Actually Comes From

If you’ve spent time with agentic development, you’ve probably felt that particular flavor of mental exhaustion that hits harder than the work seems to justify. I wasn’t stuck on a hard problem. I wasn’t debugging something gnarly. I was just… keeping up. And then suddenly I wasn’t. Steve Yegge calls this the AI Vampire: the […]

The ‘Should We?’ Question: Evaluating AI Before You Commit

There is a common type of discussion happening at lots of companies right now. It may start when a competitor ships an AI feature, when a key stakeholder asks about the AI strategy, or when someone returns from a conference excited about what AI could do. The conclusion of “we need AI in our product” […]

I Had Claude Design an App via Figma Console MCP. Here’s What Happened.

I connected Claude to Figma via the Figma Console MCP and gave it one task: build an app with a scalable design system from scratch. Here’s what worked, what broke, and what it changed about how I specify design work.

Why the Best AI Agents Are Powered by a 3-Step Loop from 2022

Part 1 of the Data Governance & AI series. I spent a month building an agent framework the “right” way. There were prescriptive JSON-based workflows with staged pipelines, and a persistence layer for coordinating human-in-the-loop feedback. A gate review system enabled agents to validate each other’s work and loop back on failures. A debate […]

The Most Important Software Development “Tool” in the Age of AI

The software industry is rapidly evolving right before our eyes. It’s both exciting and somewhat terrifying to witness. Paradigm shifts are often uncomfortable, but we know that AI tooling is here to stay, so we need to make the most of it — in a responsible manner. It’s very easy to tell a coding agent […]